An object store can host a large number of files. AWS S3 was the first hosted solution on the market for serving so-called "buckets". Since then, a number of other service providers have started offering object stores, and you usually pay a fee for the total size of your data and for egress, which is the outgoing traffic. However, none of those with acceptable conditions is located in the EU, which is a precondition for full GDPR compliance.

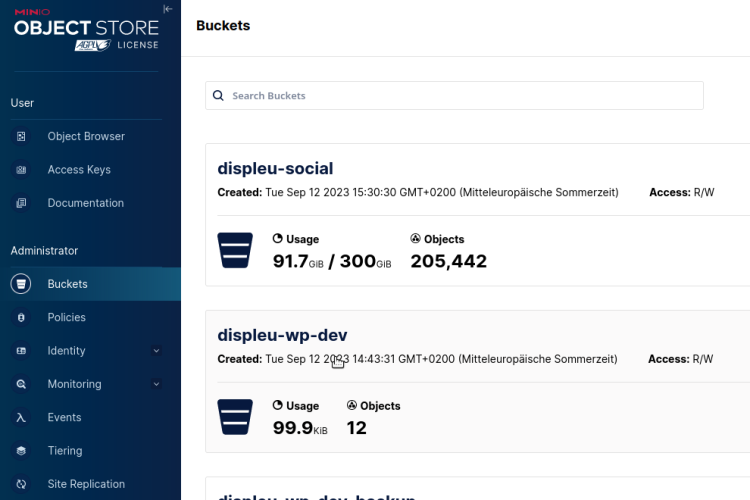

We had experimented with the open source solution Minio for a while and were quite happy with it. On our server serles, it has been serving the files of our Mastodon server fairmove.net for two years. However, when it came to building a CDN for the Display Europe portal and video hub, we had to decide between extending and outsourcing our 20TB+ object store needs.

When we found out that the replication mechanism of Minio can mirror S3 servers and would thus allow us to load-balance traffic, we decided to do it ourselves. So we rented some decent hardware with 4x10TB disks and started to customize the ansible script of ricsanfre, which allows us to roll out Minio servers as soon as we have to expand our capacity.
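As a rough illustration of what such a replication setup can look like, here is a minimal sketch using site replication from the Minio client `mc`. Whether site replication or per-bucket replication fits a given setup is an assumption here, and the alias `minio01` is hypothetical (`minio02` is the alias used in the steps further down). Both aliases must already be configured in the `mc` client.

```shell
#!/bin/sh
# Sketch only: link two Minio deployments with site replication.
set -eu

# Set MC=echo for a dry run that only prints the mc arguments.
MC="${MC:-mc}"

setup_site_replication() {
    primary="$1"   # alias of the existing server (hypothetical name)
    replica="$2"   # alias of the new, empty server
    # Register both deployments as replication peers.
    "$MC" admin replicate add "$primary" "$replica"
    # Show the resulting replication configuration.
    "$MC" admin replicate info "$primary"
}

# Usage: setup_site_replication minio01 minio02
```

Keeping the call in a function with an overridable `MC` makes it possible to review the exact commands before they touch a production server.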

It turned out that several tasks are necessary for setting up a new S3 Minio server, and not all of these steps are included in our script yet:

- install OS (can be activated in playbook)

- configure XFS drives

- nginx proxy

- ufw allow 443

- ufw allow 80

- install certbot and request a certificate (use a dedicated domain just for replication; this makes it easier to remove replication at a later stage)

apt install certbot python3-certbot-nginx

certbot --nginx -d mountainname.fairkom.net

- (copy certs from LE into /etc/minio/ssl when running ansible the first time; not needed with the deploy hook below)

- run

ansible-playbook playbook.yml --ask-vault-pass

- add /etc/letsencrypt/renewal-hooks/deploy/0000-minio-cert.sh

- check settings in /etc/minio/minio.conf

- configure minio client in .mc/config.json

- check if minio answers:

mc admin info minio02

- add caching folder

mkdir /var/cache/nginx

chown www-data /var/cache/nginx

chmod 700 /var/cache/nginx

- remove nginx logging if everything works for production
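The deploy hook mentioned in the steps above could look roughly like the following. This is a sketch, not our exact script: it assumes that Minio runs as the user `minio` under a systemd unit called `minio`, and it relies on certbot exporting `RENEWED_LINEAGE` to deploy hooks and on Minio reading `public.crt` and `private.key` from its certs directory.

```shell
#!/bin/sh
# Sketch of /etc/letsencrypt/renewal-hooks/deploy/0000-minio-cert.sh
set -eu

# Copy a certbot lineage into a Minio certs directory.
# Minio looks for the file names public.crt and private.key.
copy_certs() {
    src="$1"
    dst="$2"
    install -m 0644 "$src/fullchain.pem" "$dst/public.crt"
    install -m 0600 "$src/privkey.pem" "$dst/private.key"
}

# certbot sets RENEWED_LINEAGE (the live directory of the renewed
# certificate) when it runs deploy hooks; without it nothing happens.
if [ -n "${RENEWED_LINEAGE:-}" ]; then
    copy_certs "$RENEWED_LINEAGE" /etc/minio/ssl
    # Assumption: service user and systemd unit are both named "minio".
    chown minio:minio /etc/minio/ssl/public.crt /etc/minio/ssl/private.key
    systemctl restart minio
fi
```

The script has to be executable so that certbot picks it up after a successful renewal.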

After half a year of development and testing, we finally migrated all buckets to the larger server falknis.

When reviewing the size of some buckets, we decided to suspend versioning for video and social media assets. Versioning would allow us to go back to any state in history, but prohibits physical deletion. This made our Mastodon bucket 10 times larger than the actual media it holds. Rolling back those services is a rather unlikely scenario.
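Suspending versioning can be done per bucket with the Minio client. The sketch below assumes the alias `minio02` from the setup steps; the bucket names in the usage line are hypothetical. Note that suspending only stops new versions from piling up: versions that already exist stay on disk until they are removed, for example by a lifecycle rule.

```shell
#!/bin/sh
# Sketch only: suspend versioning on a list of buckets.
set -eu

# Set MC=echo for a dry run that only prints the mc arguments.
MC="${MC:-mc}"

suspend_versioning() {
    alias_name="$1"
    shift
    for bucket in "$@"; do
        # Stop creating new object versions in this bucket.
        "$MC" version suspend "$alias_name/$bucket"
        # Print the versioning state to confirm the change.
        "$MC" version info "$alias_name/$bucket"
    done
}

# Usage (bucket names are hypothetical):
# suspend_versioning minio02 mastodon-media video-assets
```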

After all, it was more work than anticipated. With our own S3 storage provider, we built another cornerstone for full data sovereignty, which plays well together with our own kubernetes cluster, which offers "more costly" CEPH volumes. We do not offer direct access to k8s or S3 hosting for customers (yet), but use it for our own and hosted services. But we are happy to share our knowledge and experience when it comes to building similar infrastructures at other organisations.